BUILD YOUR SHIPS.

GATHER YOUR CREW.

EXPLORE THE GALAXY IN SEARCH OF COFFEE.

ARGUE WITH ROBOTS.

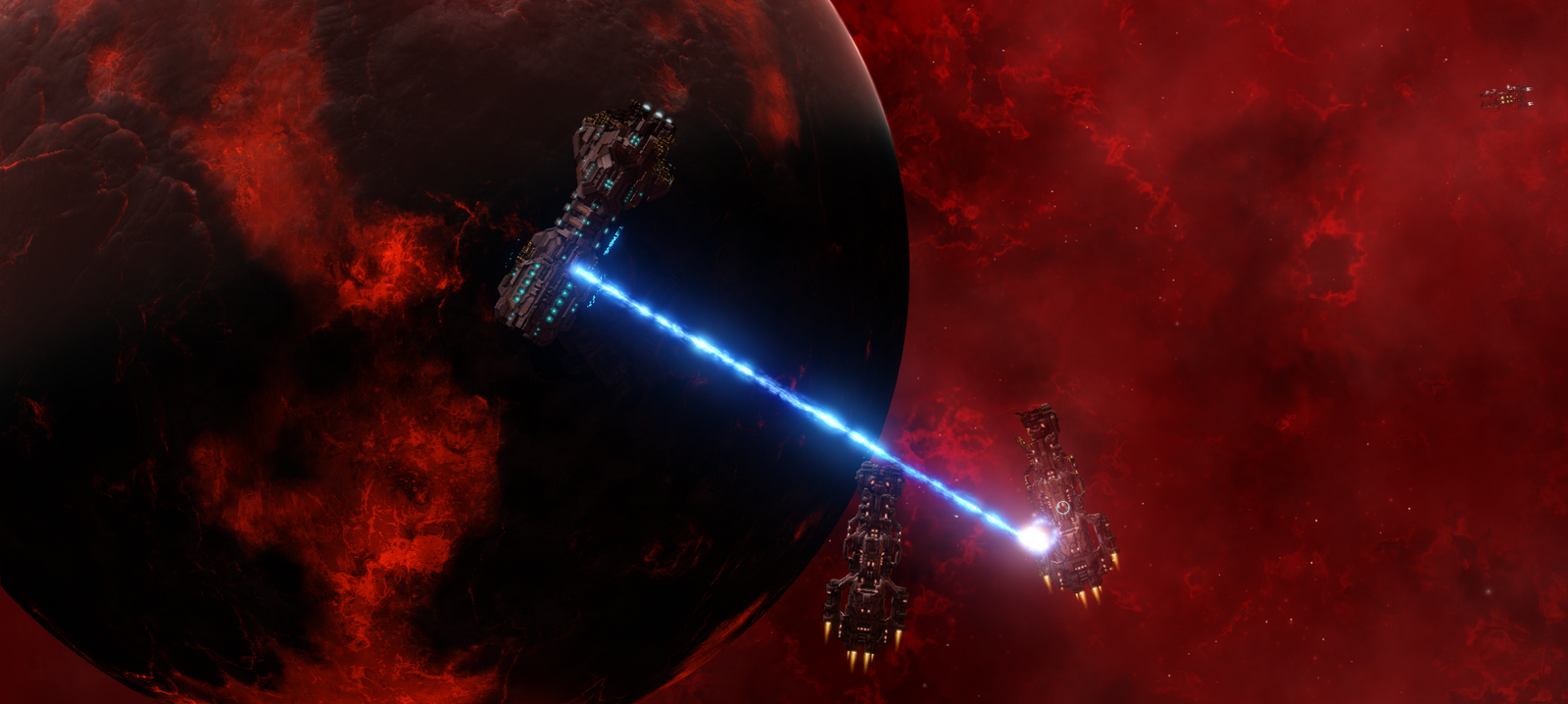

Every pixel of the ship is destructible. Reactors explode when damaged, and ships break apart in real time, with pieces flying off and entire hulls cut in half. Be careful that enemy gunfire doesn't separate you from the front half of your ship while you're repairing your engines.

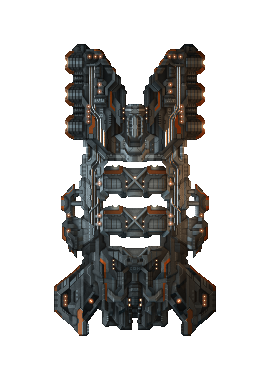

Excavator

Class: Mining Barge

Manufacturer: Goliath Industries

With inbuilt refineries and a large cargo hold, the Excavator became a favorite ship of rich and successful miners. It is often equipped with a mining anchor for additional ore yield.

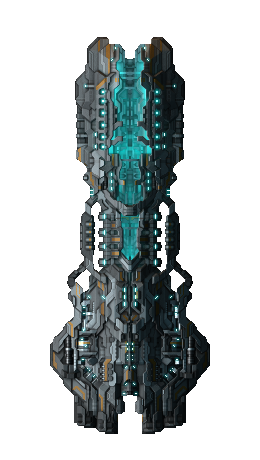

Halberd

Class: Battleship

Manufacturer: SSC

Top-of-the-line military production from the Seven Star Coalition. It uses frontal-cone heavy beam emitters as its main weaponry, supported by a set of heavy turrets.

Overseer

Class: Black-ops Battleship

Manufacturer: Shavala Corp.

Equipped with a bleeding-edge cloaking generator and a singularity generator capable of disrupting movement of entire fleets, the Overseer dictates the flow of battle.

Avorsus

Class: Unknown

Manufacturer: Unknown

A ship of unknown origin, reportedly spotted in the outer rings of newly colonized systems.